Description

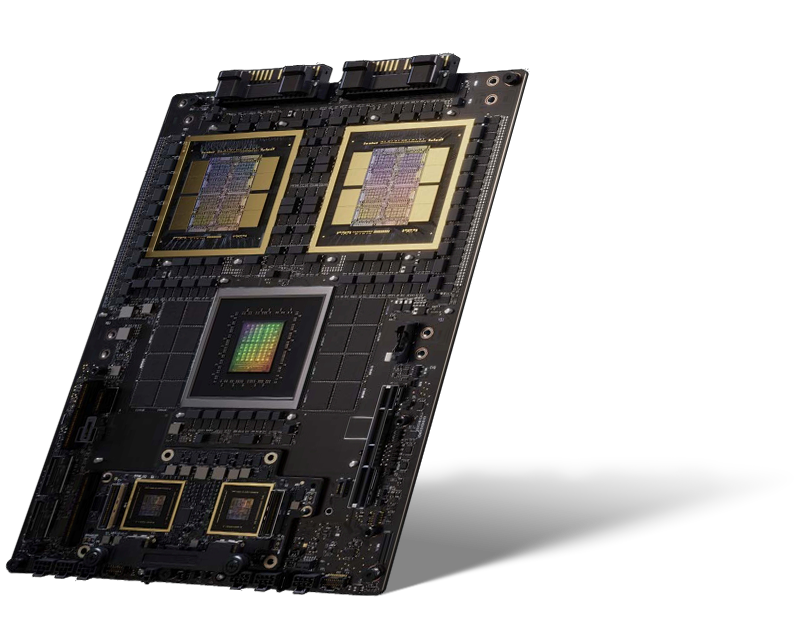

The NVIDIA GB300 NVL72 is a rack-scale system optimized for large AI model training, fine-tuning, and real-time inference on models with more than 1 trillion parameters. The architecture comprises 36 NVIDIA Grace Arm processors and 72 NVIDIA Blackwell Ultra GPUs, all interconnected with NVIDIA’s fifth-generation NVLink technology. The rack is powered by a set of power shelves through a bus bar, and the compute trays are cooled with cold plates on the CPUs and GPUs. The HPE offering incorporates direct liquid cooling technologies from HPE. NVIDIA GB300 NVL72 by HPE offers a complete, scalable solution for AI and HPC customers, delivering maximum performance for large-model AI training and deep learning. Built with high-speed networking technologies, integrated storage, and a robust portfolio of software and management tools, NVIDIA GB300 NVL72 by HPE systems enable customers to innovate and prepare for tomorrow’s challenges.

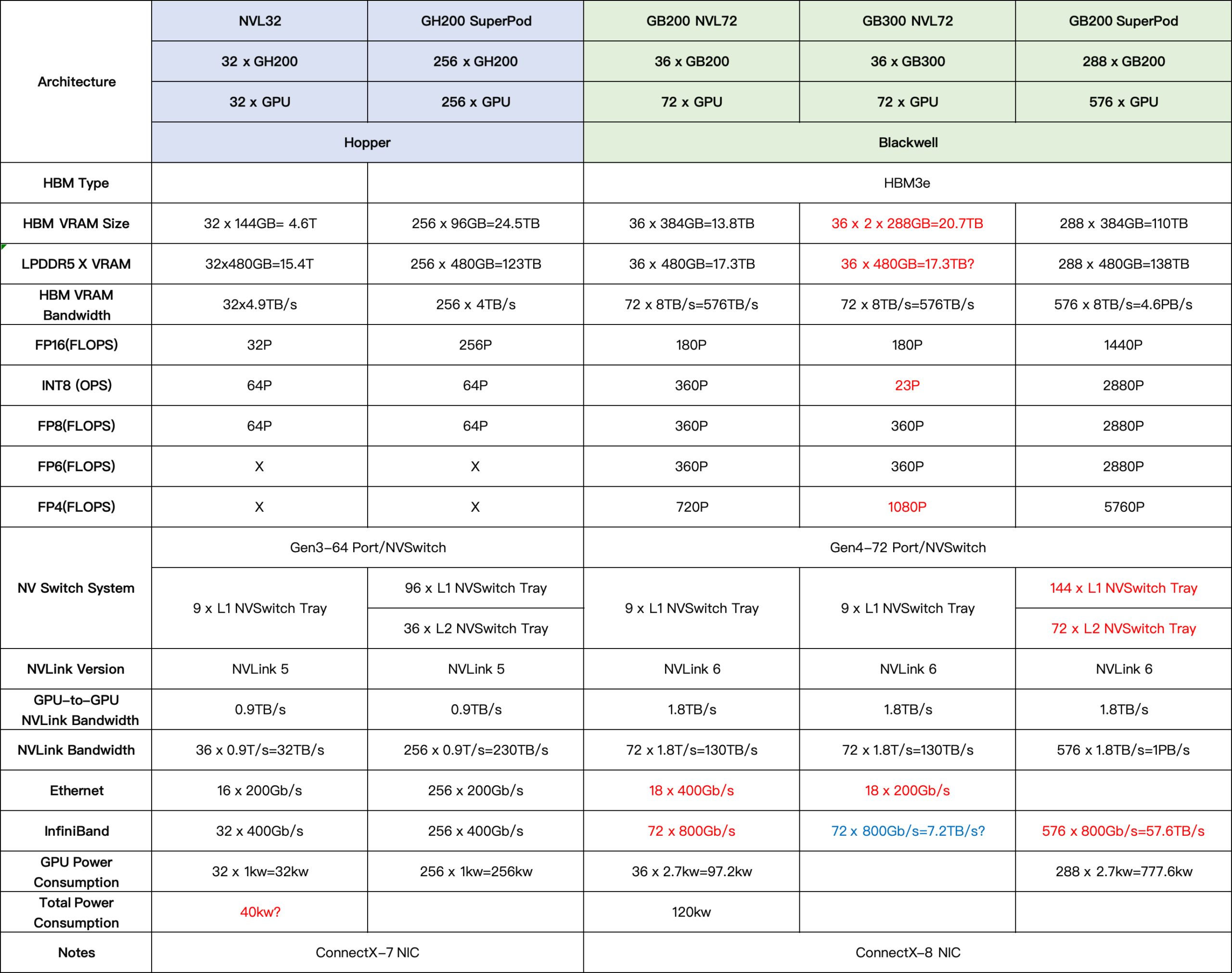

NVIDIA’s GB300 NVL72 connects 72 Blackwell Ultra GPUs and 36 Arm Neoverse-based NVIDIA Grace CPUs in a rack-scale design that acts as a single, very large GPU built for test-time scaling. With the platform’s added performance, AI models can explore different solutions to a problem and break complex requests into multiple steps, resulting in higher-quality responses.

The GB300 NVL72 maximizes overall AI factory performance by addressing key bottlenecks in AI computing. At the chip level, the densely co-packaged HBM3e increases memory capacity to 288GB per GPU. Each of the 72 GPUs per rack is connected with 1.8TB/s NVLink to form a pool of approximately 21TB of HBM3e, enough capacity to hold very large AI models within the fastest regions of the memory hierarchy. Cluster-level speeds are doubled via the GB300 NVL72’s integrated NVIDIA ConnectX®-8 NICs, with up to 800Gb/s throughput across the compute fabric. The GB300 NVL72 offers dramatic speedups for AI training applications with elevated memory requirements.
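The rack-level figures above follow from simple multiplication of the per-GPU numbers. The short Python sketch below reproduces that arithmetic using only the values quoted in this section (72 GPUs, 288GB of HBM3e per GPU, 1.8TB/s NVLink per GPU). The function names are illustrative, decimal units (1TB = 1000GB) are assumed, and the weights-only capacity estimate is a rough upper bound that ignores KV cache, activations, and optimizer state.

```python
# Per-GPU figures quoted in the text above.
GPUS_PER_RACK = 72
HBM3E_PER_GPU_GB = 288
NVLINK_PER_GPU_TB_S = 1.8


def rack_hbm_tb(gpus=GPUS_PER_RACK, gb_per_gpu=HBM3E_PER_GPU_GB):
    """Total HBM3e per rack in decimal terabytes (assumes 1 TB = 1000 GB)."""
    return gpus * gb_per_gpu / 1000


def aggregate_nvlink_tb_s(gpus=GPUS_PER_RACK, per_gpu=NVLINK_PER_GPU_TB_S):
    """Sum of per-GPU NVLink bandwidth across the rack, in TB/s."""
    return gpus * per_gpu


def weights_only_capacity_trillions(bytes_per_param):
    """Rough upper bound on parameters (in trillions) whose weights alone
    would fit in rack HBM. bytes_per_param is an assumption chosen by the
    caller (for example 2 for 16-bit weights, 1 for 8-bit weights)."""
    total_bytes = rack_hbm_tb() * 1e12
    return total_bytes / bytes_per_param / 1e12


if __name__ == "__main__":
    print(f"HBM3e per rack:   {rack_hbm_tb():.3f} TB")
    print(f"Aggregate NVLink: {aggregate_nvlink_tb_s():.1f} TB/s")
    for width in (2, 1):
        cap = weights_only_capacity_trillions(width)
        print(f"{width} byte(s)/param: about {cap:.1f} trillion parameters (weights only)")
```

The exact product is 20.736TB, which rounds to the 21TB quoted above.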

NVIDIA GB300 NVL72: Built for the Age of AI Reasoning

The NVIDIA GB300 NVL72 features a fully liquid-cooled, rack-scale design that unifies 72 NVIDIA Blackwell Ultra GPUs and 36 Arm®-based NVIDIA Grace™ CPUs in a single platform optimized for test-time scaling inference. AI factories powered by the GB300 NVL72, using NVIDIA Quantum-X800 InfiniBand or Spectrum™-X Ethernet paired with the ConnectX®-8 SuperNIC™, provide 50x higher output for reasoning model inference compared to the NVIDIA Hopper™ platform.
